
Creating a local PySpark / Delta Lake / Jupyter setup can be a bit tricky, but you’ll find it easy by following the steps in this guide. Pay close attention when setting your PySpark and Delta Lake versions, as they must be compatible. This post will show you how to pin both versions when creating your environment.

You need to install Java to run PySpark. This guide will teach you how to install Java with a package manager that lets you easily switch between Java versions. Feel free to skip this section if you’ve already installed Java.
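As a rough illustration of the two prerequisites above (Java being available, and PySpark / Delta Lake pins that match), here is a minimal sketch of a pre-flight check. The helper names (`minor`, `pins_are_compatible`, `java_on_path`) are hypothetical, and the version table is an assumption added for illustration rather than something stated in this post, so confirm the pairs against the Delta Lake release notes before relying on them.

```python
import shutil

# Illustrative pairs only (an assumption, not taken from this post):
# Delta Lake minor release -> PySpark minor release it is paired with.
# Verify against the Delta Lake release notes before pinning.
DELTA_TO_PYSPARK = {
    "2.3": "3.3",
    "2.4": "3.4",
    "3.0": "3.5",
}


def minor(version: str) -> str:
    """Return the 'major.minor' prefix of a version string such as '3.4.1'."""
    return ".".join(version.split(".")[:2])


def pins_are_compatible(pyspark_version: str, delta_version: str) -> bool:
    """True if the pinned versions match a known pair in the table above."""
    expected = DELTA_TO_PYSPARK.get(minor(delta_version))
    return expected is not None and expected == minor(pyspark_version)


def java_on_path() -> bool:
    """True if a `java` executable can be found on the PATH."""
    return shutil.which("java") is not None


if __name__ == "__main__":
    print("java found:", java_on_path())
    print("pins ok:", pins_are_compatible("3.4.1", "2.4.0"))
```

A Delta Lake version that is missing from the table is reported as incompatible, which errs on the side of prompting you to look up the correct pairing.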

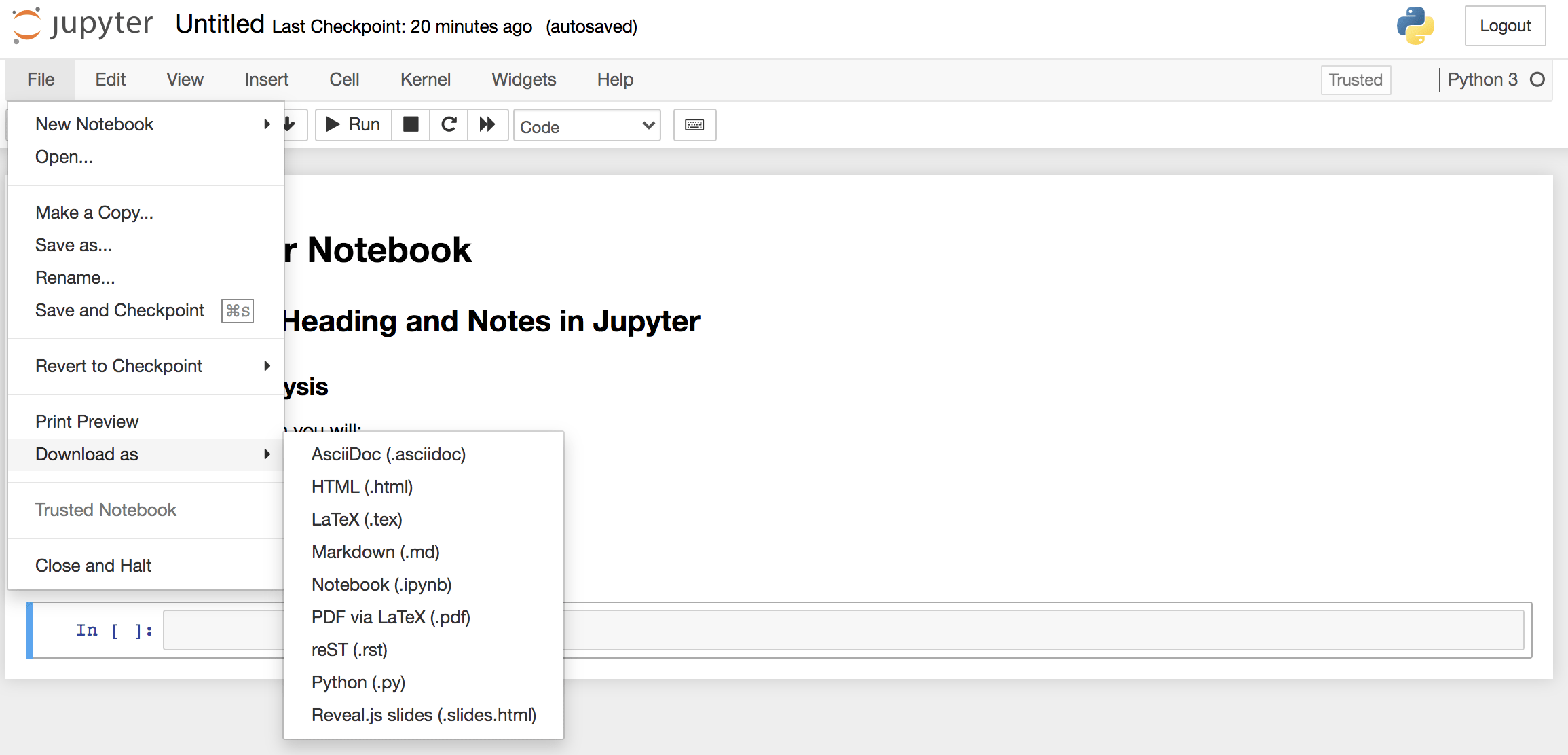

This blog post explains how to install PySpark, Delta Lake, and Jupyter Notebooks on a Mac. This setup will let you easily run Delta Lake computations on your local machine in a Jupyter notebook, whether for experimentation or to unit test your business logic.
